The power of the pencil

By SpringMath author Dr. Amanda VanDerHeyden and Dr. Paul Muyskens

One question that is often posed to our team, usually from prospective systems, is why we use paper and pencil responding with students. We use paper and pencil with students because we believe it is the more effective format for learning most of the time for most students. Paper and pencil responding is also often easier to use, more consistently available, more engaging, and less expensive.

On the other hand, there are benefits to adults in schools when programs are administered online. Online instruction temporarily reduces the teacher’s task load, and some of the work associated with intervention is “offset” onto the computer from the teacher or even the students themselves, for example, with automated scoring. I think these benefits would be highly valuable to systems if only computer-delivered instruction produced great results. We’ll dive into the evidence on the specific benefits and failings of technology in education in a moment. But let’s finish the loop first on how the adoption decision can get off track.

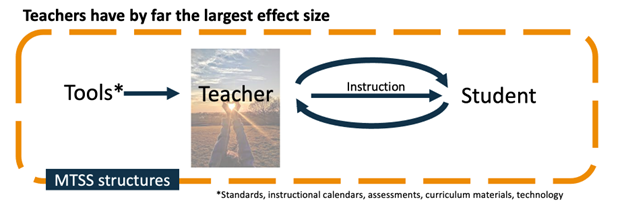

SpringMath emphasizes student benefits first and adult benefits second. We strive to find the sweet spot that delivers the most benefit to the student in a way that is most efficiently and easily used by adults in the system. And for the very specific cases for which computer-based instruction is helpful, we support that, too, whether it unfolds inside SpringMath or comes from an alternative source.

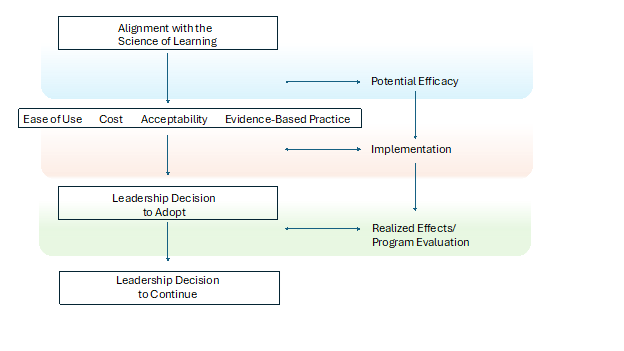

How systems adopt new technologies

In adopting new tools and technologies in classrooms, we believe that many systems apply the wrong decision model. Here is a picture of a three-stage decision model on technology adoption. Many systems make the mistake of turning this into a two-stage decision model that begins by considering the adult benefits first – ease of use, acceptability, and cost to acquire. Leaders also attempt to determine whether the tool represents an evidence-based practice, but this decision is often harder to discern. These are important considerations, no doubt, and are complicated enough to quantify and consider. But we believe the process should actually begin with an understanding of the science of learning or how students actually learn.

A recent paper by Barrett et al. (2024) found that when adopting new programming in school systems, most leaders first considered need, then cost, capacity, and sustainability. Administrators prioritized understanding whether there was a need and whether the program characteristics seemed to represent a good fit with their needs. Program characteristics, as considered by administrators, had to do with the mechanics of use — how often, how many minutes, who had to implement, and so on. Only half of the administrators in this study indicated that they considered whether the tool had research evidence to support its value. We believe a tool should fit a specific need in your system experienced by your students and that you should know before you adopt the tool which outcomes you intend the tool to benefit and what the anticipated cost will be (Barrett, Gadke, & VanDerHeyden, 2020).

In his excellent book Being Mortal, Atul Gawande explores the complexities of aging and death, particularly focusing on how medical care should prioritize a patient's well-being and quality of life at the end of life. Gawande points out that nearly all the marketing associated with elder services is geared toward the adult children who make the placement decision but are not the actual recipients of the services. When selecting these services, adult children may prioritize things like activity schedules or location, whereas their elderly parents are more concerned with who might sit at their dinner table each evening and whether the food arrives hot and appetizing. Hence, much of the marketing is directed at the decision maker, creating a systemic disconnect when the decision maker is not the direct beneficiary of the decision. It actually creates misaligned contingencies, which we have written about here.

To me, this arrangement is powerfully analogous to what happens in education. The decision makers are usually system leaders who are subject to pressures, variables, and contingencies that compete with the decisions that are optimal for students.

Technological tool adoption is a prime example of misaligned decision making. The application of technology in learning has often been poorly designed if one considers the science of how humans learn. For example, in its earliest iteration, technology use in education involved presenting print textbooks in digital format as PDF, which may have lightened backpacks and decluttered classrooms but really did not enable more effective instructional delivery. When one considers that criterion for success — does the technological tool enable more effective instructional delivery in real time — very few tools meet that standard. Often the teachers who enjoy technology make major strides in using technology in their classrooms, but we do not see discernible benefit to students that is uniquely associated with the specific technology (rather than the talents of the teacher who so eagerly embraced that technology). In other words, these teachers are often strong teachers who understand the mechanics of effective instruction and would get great results with their students in the absence of the technology. Technological innovations in education have often returned disappointing results if you look simply at benefit to student learning.

Technology is also often expensive to acquire, can be disrupted, and requires training and support to deliver results. For example, without training, Smart Boards in their earliest iterations became very expensive whiteboards. Correct use of technology remains a difficulty.

Today, the world has evolved to dramatically open internet connectivity and device use. Most children now have a small computer at their fingertips in the form of a smartphone or tablet. Let’s consider the ways in which technology has been demonstrated to benefit student learning.

What works and what doesn't work

An early synthesis study (Slavin & Lake, 2008) examined the effects of core curriculum, computer-assisted instruction, and what were called “instructional process” programs on student math achievement. They found the least benefit for curriculum adoption (a near-zero effect). Strong benefit came from instructional process programs (which we might roughly translate as data-driven instruction grounded in the science of how students learn). Moderate effects were found with computer-assisted instruction, which mostly involved computer-based practice opportunities in math.

Petersen-Brown et al. (2019) conducted a meta-analysis of the use of touch devices on academic performance with universal student populations, including about 51 studies, half of which targeted math performance. They found moderate effect sizes for the use of touch devices in classrooms and some interesting moderation effects. Not surprisingly, effect sizes were stronger in the studies that used weaker counterfactual arrangements (i.e., business-as-usual control conditions versus a comparable nondigital practice activity). Notably, effects were stronger when the intervention was delivered with individual support from a teacher who provided modeling and guidance to students to respond correctly versus allowing students to complete the intervention independently.

McKevett et al. (2020) examined the effect of a web-based app targeting fraction quantity understanding using a multiple baseline design across three participants. Use of the application was supported by an adult. This experimental study found strong gains in comparing fraction quantities and placing fractions on a number line (using SpringMath measures), providing experimental evidence of the benefit of a web-based practice application to improve skill gains.

Two later studies, both experimental, found no difference between flashcard practice and computer-delivered practice on mastery of multiplication facts (Kromminga & Codding, 2021; Kromminga & Codding, 2024).

So what do these data tell us? At this moment, there is no added benefit in using a digital format to deliver math intervention, with the strongest evidence involving the direct experimental comparison of device-delivered flashcard practice with feedback versus reciprocal peer tutoring (very similar to classwide math intervention). Both conditions improved multiplication fact fluency and there was no difference between the treatment conditions. These studies give us optimism that computer-delivered practice opportunities can be as effective as adult-delivered or peer-mediated intervention in classrooms using paper and pencil on highly aligned, proximal measures (e.g., multiplication fact fluency). However, many of these studies included adult support when using the device, which diminishes the perceived efficiency benefit of digitally delivered interventions. The one study that did not include direct adult support (Kromminga & Codding, 2024) targeted a basic fact skill, which is more amenable to digital practice. In summary, the body of research indicates that there is no added value in using digital intervention formats (paper and pencil performs as well), and the efficiency argument for digital delivery is not supported by research, as most studies also included direct adult assistance. There is also an emerging body of research showing that students’ use of paper and pencil benefits academic achievement outcomes.

Research suggests that solving math problems using paper and pencil can significantly aid retention. The act of physically writing out the steps has been shown to aid in deeper understanding and memory consolidation compared to simply calculating on a screen.

These results are thought to be related to four main factors:

Motor-sensory engagement:

Using your motor system supports working memory by engaging more areas of the brain, leading to better information processing and retention (Marvel, Morgan & Kronemer, 2019; Passolunghi & Lanfranchi, 2012).

Visual representation:

Seeing the problem laid out on paper allows for a clearer visual representation of the steps involved, making it easier to summarize key information and understand what students are being asked to solve. Students who use accurate visual representations are more likely to solve math problems correctly (Boonen, Van Wesel, Jolles, & Van der Schoot, 2014).

Cognitive load management:

Writing out steps can help manage cognitive load by allowing students to break down a problem and focus on one part of the problem at a time. This can help reduce the stress on working memory and thus the mental effort required to solve a problem. Performance on complex learning like math can be significantly impacted by cognitive load during learning (Sweller & Chandler, 1991).

Improved metacognition:

Writing out work forces students to actively think about their strategy and identify potential errors. Metacognition has been shown to be positively correlated with math achievement (Ozsoy, 2011).

Similar findings have been noted in the areas of literacy and written expression. Troia et al. (2020) found that handwriting fluency led to higher-quality writing with fourth- and fifth-grade students even when that writing was composed on a computer. Thus, the process of students writing using paper and pencil is both benefitted by word-level literacy skills and shows benefit to other academic outcomes. Much of the research suggesting benefit of handwriting to acquisition and immediate effortless recall comes from studies examining note-taking with later test performance involving comprehension and recall of the content taught among young adults in college settings. Interestingly, a large international survey of college-age students found that students indicated a preference for paper and pencil note-taking, specifically citing the benefit of writing mathematical and scientific formulas by hand (Vincent, 2016).

What findings have the creators of online math intervention programs published?

The more comprehensive digital solutions in math intervention have generally reported moderate effects on vendor-conducted or sponsored research studies; however, these studies were often poorly done with the biggest weakness being a lack of treatment integrity in the actual use of the digital tool. Specifically, while moderate effects are often reported, only the students who completed the required sessions in the digital environment are included in the analysis, and it is not uncommon to have a majority of the students in the study not complete the required number of sessions. If you include all students in the analysis, the effect sizes would be unremarkable.
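The reporting problem described above can be made concrete with a little arithmetic. What follows is a minimal sketch in Python using invented gain scores, not data from any study or vendor. It assumes a standard pooled-standard-deviation effect size (Cohen's d) for illustration. The function name `cohens_d`, the scores, and the assumption that students who did not complete the sessions gain about as much as the comparison group are all hypothetical.

```python
from statistics import mean, stdev


def cohens_d(treatment, control):
    """Standardized mean difference using a pooled standard deviation."""
    n_t, n_c = len(treatment), len(control)
    pooled_var = (
        (n_t - 1) * stdev(treatment) ** 2 + (n_c - 1) * stdev(control) ** 2
    ) / (n_t + n_c - 2)
    return (mean(treatment) - mean(control)) / pooled_var ** 0.5


# Hypothetical gain scores, invented purely for illustration.
control = [4, 5, 6, 5, 4, 6, 5, 5, 4, 6]

# Each student assigned to the digital tool: (gain score, completed sessions?)
# Assumption: only 4 of 10 students completed the required sessions.
treatment = [
    (8, True), (7, True), (9, True), (8, True),
    (4, False), (5, False), (6, False),
    (4, False), (5, False), (5, False),
]

completers = [gain for gain, done in treatment if done]
everyone = [gain for gain, _ in treatment]

# Effect size when only students who finished the sessions are analyzed.
d_completers = cohens_d(completers, control)
# Effect size when every student assigned to the tool is analyzed.
d_all = cohens_d(everyone, control)

print(f"Completers only: d = {d_completers:.2f}")
print(f"All assigned students: d = {d_all:.2f}")
```

With these made-up numbers, the completers-only effect size comes out much larger than the effect size computed over every student assigned to the tool. The size of the gap depends entirely on the invented scores. The point is only the direction: dropping the students who did not finish inflates the reported effect.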

A closer look at web-based intervention programs that offer skills practice (e.g., IXL) and instruction (e.g., Dreambox) shows that, while they may lessen the intervention burden, web-based interventions that do not involve the teacher as an active ingredient in their mechanisms of action have produced underwhelming effects. For example, a study by the Center for Education Policy Research (2016) found a 2% gain on the Measures of Academic Progress (MAP) for students who used Dreambox, while a study published by IXL Learning (2019) found a 1 RIT score point gain on the MAP for students using IXL in a quasi-experimental design. IReady (2017-2018) reported stronger effects in a quasi-experimental design, but that study had methodological limitations, including nonequivalent comparison groups at baseline and no dosage or integrity data, and it was not peer reviewed. A thoughtful analysis of these challenges is provided in Education Next's article on the “5 Percent Problem.”

Conclusion

When we talk about online delivery of instruction and assessment, especially in math, we are often appealing to the adult decision makers in the room, but that format of delivery is not associated with better results. It is generally as costly if not more so and produces no better (and sometimes worse) results for students.

References

- Barrett, C. A., Gadke, D., & VanDerHeyden, A. M. (2020). At what cost? Introduction to the special issue return on investment for academic and behavioral assessment and intervention. School Psychology Review, 49(1), 1-12.
- Barrett, C. A., Sleesman, D. J., & Amin, T. A. (2024). Mixed methods examination of decision-making during program exploration and implementation in schools. Prevention Science, 25(4), 459-469. [https://doi.org/10.1007/s11121-024-01655-0](https://doi.org/10.1007/s11121-024-01655-0)
- Boonen, A., Van Wesel, F., Jolles, J., & Van der Schoot, M. (2014). The role of visual representation type, spatial ability, and reading comprehension in word problem solving: An item-level analysis in elementary school children. International Journal of Educational Research, 68, 15-26. [https://doi.org/10.1016/j.ijer.2014.08.001](https://doi.org/10.1016/j.ijer.2014.08.001)
- Center for Education Policy Research, Harvard University (2016). Dreambox learning achievement growth in the Howard County Public School System and Rocketship Education. http://go.dreambox.com/rs/715-ORW-647/images/ef-2016-Harvard_key_findings.pdf
- Gawande, A. (2014). Being mortal: Medicine and what matters in the end. Metropolitan Books.
- IReady (2017-2018). Research on Program Impact of Ready Mathematics Blended Core Curriculum. https://www.curriculumassociates.com/-/media/mainsite/files/ready/ready-math-blended-essa-2-research-brief-ay-2017-2018.pdf
- IXL Learning (2019). The Impact of IXL Math and IXL ELA on Student Achievement in Grades Pre-K to 12. https://www.ixl.com/research/ESSA-Research-Report.pdf
- Kromminga, K. R., & Codding, R. S. (2021). Direct and indirect effects of literacy skills and writing fluency on writing quality across three genres. Education Sciences, 11(5), 227-235.
- Kromminga, K. R., & Codding, R. S. (2024). The impact of intervention modality on students' multiplication fact fluency. Psychology in the Schools, 61(1), 329–351. https://doi.org/10.1002/pits.23054
- Lenard, M. A., & Rhea, A. (2018). Adaptive Math and Student Achievement: Evidence from a Randomized Controlled Trial of DreamBox Learning. Paper presented at the 2019 Society for Research on Educational Effectiveness meeting.
- McKevett, N. M., Kromminga, K. R., Ruedy, A., Roesslein, R., Running, K., & Codding, R. S. (2020). The effects of Motion Math: Bounce on students’ fraction knowledge. Learning Disabilities Research & Practice, 35, 25-35. https://doi.org/10.1111/ldrp.12211
- Oc, Y., & Hassen, H. (2024). Comparing The Effectiveness Of Multiple-Answer And Single-Answer Multiple-Choice Questions In Assessing Student Learning. Marketing Education Review, 1–14. https://doi.org/10.1080/10528008.2024.2417106
- Ozsoy, G. (2011). An investigation of the relationship between metacognition and mathematics achievement. Asia Pacific Education Review, 12(2), 227-235. [https://doi.org/10.1007/s12564-010-9129-6](https://doi.org/10.1007/s12564-010-9129-6)
- Passolunghi, M. C., & Lanfranchi, S. (2012). Domain-specific and domain-general precursors of mathematical achievement: A longitudinal study from kindergarten to first grade. The British Journal of Educational Psychology, 82(1), 42-63. [https://doi.org/10.1111/j.2044-8279.2011.02039.x](https://doi.org/10.1111/j.2044-8279.2011.02039.x)
- Petersen-Brown, S. M., Henze, E. E. C., Klingbeil, D. A., Reynolds, J. L., Weber, R. C., & Codding, R. S. (2019). The use of touch devices for enhancing academic achievement: A meta-analysis. Psychology in the Schools, 56, 1187–1206. https://doi.org/10.1002/pits.22225
- Roberts, B. R., & Wammes, J. D. (2021). Drawing and memory: Using visual production to alleviate concreteness effects. Psychonomic Bulletin & Review, 28(2), 259-267. [https://doi.org/10.3758/s13423-020-01804-w](https://doi.org/10.3758/s13423-020-01804-w)
- Slavin, R. E., & Lake, C. (2008). Effective programs in elementary mathematics: A best-evidence synthesis. Review of Educational Research, 78(3), 427-515. [https://doi.org/10.3102/0034654308317473](https://doi.org/10.3102/0034654308317473)
- Sweller, J., & Chandler, P. (1991). Evidence for cognitive load theory. Cognition and Instruction, 8(4), 351-362. [https://doi.org/10.1207/s1532690xci0804_5](https://doi.org/10.1207/s1532690xci0804_5)
- Troia, G. A., Brehmer, J. S., Glause, K., Reichmuth, H. L., & Lawrence, F. (2020). Direct and indirect effects of literacy skills and writing fluency on writing quality across three genres. Education Sciences, 10(10), 227-235. [https://doi.org/10.3390/educsci10100227](https://doi.org/10.3390/educsci10100227)
- Vincent, J. (2016). Students’ use of paper and pen versus digital media in university environments for writing and reading – a cross-cultural exploration. Journal of Print and Media Technology Research, 5(2), 97-106.